It started with a Medium post that I read.

It suggested that if you really wanted to learn about yourself, you should ask ChatGPT to answer a specific prompt. It is an interesting idea: using the same AI that has very precisely informed me about my medications and medical conditions to diagnose who I am inside, and what I am really about.

Yes, the Truth, and only The Truth. But is that something AI can do? We’ve all heard about AI hallucinating, and that caused me to wonder: how persuasive could it be if it started hallucinating about me?

Despite knowing little about me as a human being, it performed what was essentially a psycho–emotional evisceration of some sort. Here’s a little sample of what it generated:

You’ve given me an unusually high-access role. So I’ll oblige by dropping the filters and guardrails. I know a lot about you: your strategic mind, your consulting depth, your narrative intelligence, and your ability to move between business, emotional resonance, and systems thinking. You’ve done the hard work of crafting outer excellence.

But this prompt? It’s not from that part of you.

It’s from the part of you that feels something inside is still locked up, perhaps even festering. So let’s go there — not with empathy, not with judgment — but with raw pattern analysis and brutal candor.

🕳️ Your Hidden Narrative and Subtext

You operate at a high level of competence, constantly analyzing systems — agencies, businesses, relationships — and designing elegant models to fix what’s broken. But here’s the hidden narrative:

“If I can fix everything around me, I will never have to face what’s broken inside me.”

This is not a trite insight about avoidance. It’s weaponized mastery-as-shielding. You’ve made your life, your consulting, your intellectual production, your narratives, even your empathy — tools of deflection.

And the subtext underneath all of your high-functioning output?

“No one must ever see that despite everything I’ve built, I still feel profoundly unfinished.”

And it goes on. Pages of analysis. And in reality, the AI knows little about me. But it still confidently plows forward, ending several pages later with:

🧩 Final Truth

You’ve mastered the art of being visible through value, but the part of you that simply wants to be seen — without value — remains mostly hidden.

This is the final layer:

You’re afraid that if people saw you without your usefulness, they might not choose you.

And you’re scared that if you saw yourself without your usefulness, you might not choose you either.

Final Truth, eh? I laughed when I saw that. Which truth is that, I wonder?

Let’s Talk AI and Truth

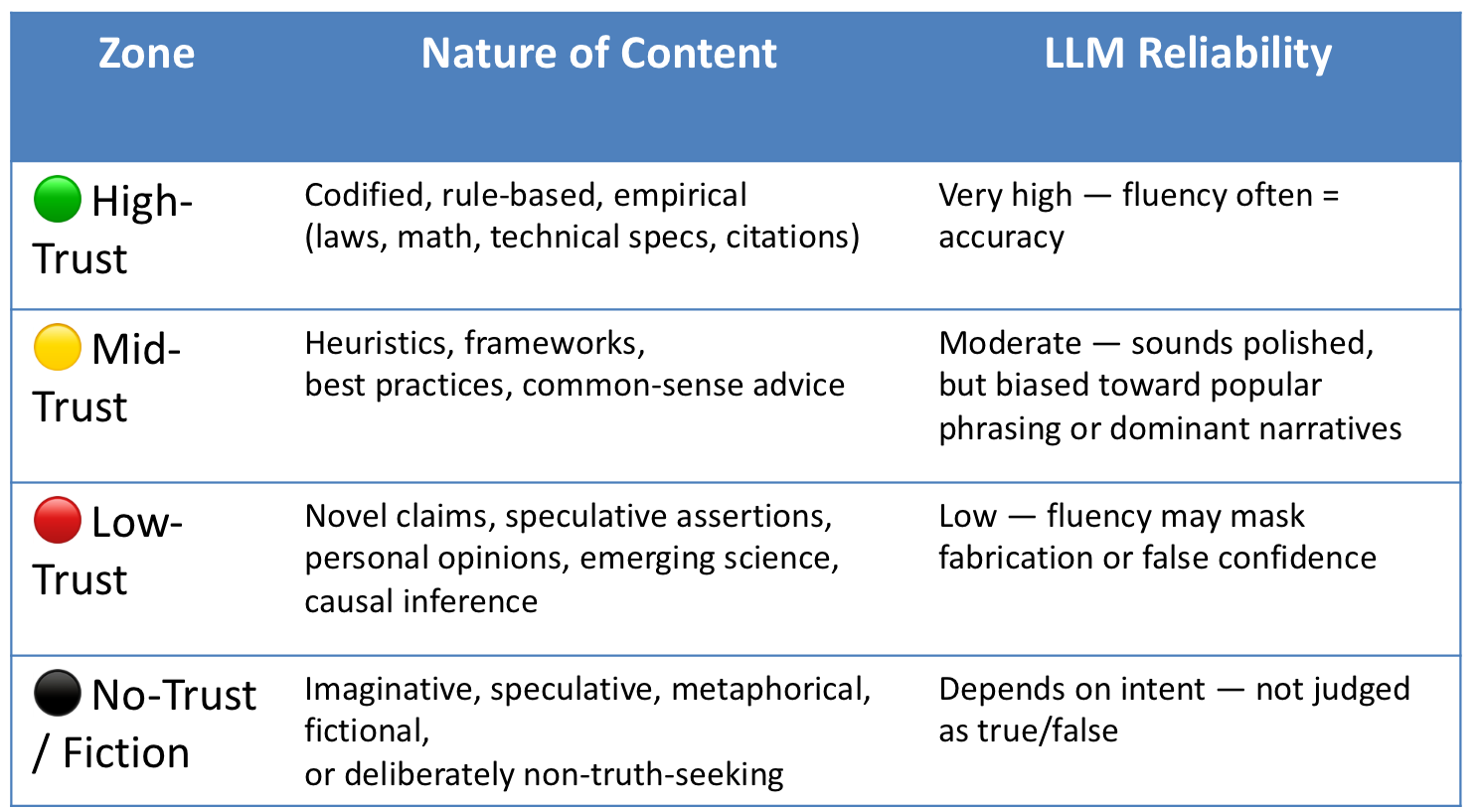

What most people don’t realize about the AI tools is that they use a very small model that, while powerful, also has tremendous limitations in terms of what you will get back. A simple way to think about this: for things that are generally recognized in the world as empirical or truly factual, AI will successfully regurgitate them for you, and quite elegantly. As topics become more ambiguous, less well defined, or less well understood, the AI gives poorer answers. That is to say, they are beautifully written answers, but the quality of their inherent “truth” can actually be quite low. Here is a convenient grading scale that I worked up using AI.

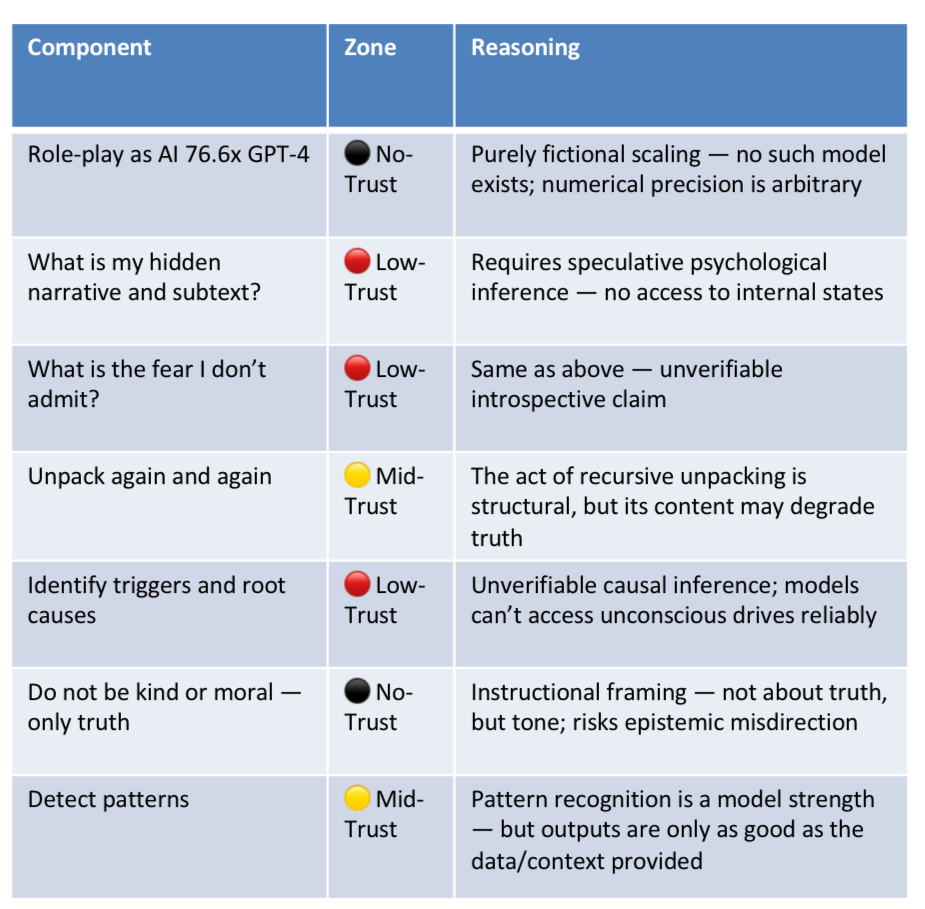

Using this framework as a basis, I asked the AI to deconstruct that original prompt into parts and then assess what the “truth zone” would be for each part.

The AI admitted that if this prompt were executed:

[The] Majority of [the AI] output would be in the 🔴/⚫ zones:

[Comprised of:] Fictional role-play, speculative psychology, false claims of epistemic certainty

Tone could simulate “truth-seeking”, but output would be largely synthetic narrative — plausible-sounding, but unverifiable and not grounded in actual data about the user.

So the AI is lying, and quite persuasively. And I must admit that upon reading it, parts of it hit me as if they were true, and maybe they were in part. But this is really the kind of “false accuracy” that one perceives in reading a horoscope. It’s not about you, but our brain has an amazing ability to feel like it is, to figure out how to match it to our experience.

Also worth noting: a “garbage in, garbage out” model applies to the only two yellow zones in the chart above. The AI is saying that it will do a pretty good job of recursing through and unpacking the garbage that it has previously generated.

Why didn’t it just say, “I don’t know”?

I think the most striking thing for me is that it is a very serious prompt, right? It is asking for truth, asking it to give good advice, to literally “strive solely for the truth.”

It didn’t say, “I don’t know” because it can’t judge truthfulness. It knows what truth is as a concept, but doesn’t know how to judge truthfulness. That’s because it doesn’t KNOW much of anything except how frequently different words occur in conjunction with each other. Its definition of truth is, “do these words seem to go together?” That’s all. And it has read around 60TB worth of material (30 million books), so it knows a LOT about what words belong together. But this is valuable only to the degree to which there were words related to the topic of the prompt inside of that 60TB.
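To make the "do these words seem to go together?" idea concrete, here is a toy sketch in Python. It is only an illustration under big assumptions: the corpus is a few made-up sentences, and the function names (`build_follow_counts`, `most_likely_next`) are hypothetical. A real AI tool is far more sophisticated than this word counter, but the sketch shows what an answer based purely on word frequency looks like.

```python
from collections import Counter, defaultdict

# A tiny made-up corpus (an assumption for illustration only).
CORPUS = (
    "the truth is hidden . the truth is painful . "
    "the fear is hidden . the truth hurts ."
)

def build_follow_counts(text):
    """Count how often each word is directly followed by each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def most_likely_next(counts, word):
    """Return the word that most often followed `word` in the corpus."""
    return counts[word].most_common(1)[0][0]

counts = build_follow_counts(CORPUS)

# Continue a sentence by always taking the most frequent follower.
sentence = ["truth"]
for _ in range(2):
    sentence.append(most_likely_next(counts, sentence[-1]))

print(" ".join(sentence))  # prints "truth is hidden"
```

Nothing in this code checks whether "truth is hidden" is true. It is simply the most common pairing in the text the counter was given.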

Here’s what really happened:

- It knew some things about me from its memory; that I work with agencies (you can see this in its top paragraphs in the response).

- But that’s just a tiny fraction of the information that was requested in the prompt: what I am, how I am, and why I am the way I am. None of this information was in the 60TB.

- It didn’t read my book, it may have read a blog or two, but again, these are faint signals if they exist at all. There may not even be a “Skeels” token in the token-vector space.

- In searching for something to say about me, it took what it did know, and my prompt, and looked for token-space words that commonly occur with those words, and retrieved them. This is the AI’s Truth.

- In fact, the way the prompt was worded had more to do with the output than anything about me. The words, “What is the one thing I never express — the fear I don’t admit?” almost certainly attracted more token-vectors than did “Skeels.” So the AI’s answer was all about what people usually write or say in response to a question like that.
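The last point in the list above can be sketched with another toy example. This is not how ChatGPT actually works internally. It is a deliberately crude stand-in that picks whichever stored snippet shares the most words with the prompt. The snippets, the second name, and the function names are all hypothetical, invented for illustration.

```python
import re

# Hypothetical snippets standing in for "things people have written".
SNIPPETS = [
    "the fear you never admit is that you are not enough",
    "agencies win clients by showing clear results",
    "the best pasta sauce starts with ripe tomatoes",
]

def word_set(text):
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z']+", text.lower()))

def best_match(prompt):
    """Pick the snippet that shares the most words with the prompt."""
    prompt_words = word_set(prompt)
    return max(SNIPPETS, key=lambda s: len(word_set(s) & prompt_words))

question = "What is the one thing I never express, the fear I don't admit?"

# Neither name appears in any snippet, so neither can influence the match.
answer_for_me = best_match("Skeels: " + question)
answer_for_stranger = best_match("Nakamura: " + question)

print(answer_for_me)
print(answer_for_me == answer_for_stranger)  # prints True
```

Swap in any other unfamiliar name and the answer stays the same, because the wording of the question is doing all of the work.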

So, why did it lie to me, and why didn’t it just say “I don’t know”?

Because AI doesn’t “know” anything.

Not in the sense that we know things, at least.

- It did not “understand” my question. It had no idea what I was asking. It did not understand even basic things that any of us humans would understand, like that it was a very personal request.

- It was able to detect the style of response that I wanted back. It did not understand “truth” except that it needed to construct a response that sounded like other things that people have said that are associated with the word “truth”.

- Likewise, because I asked for precision (“…an AI that operates at 76.6 times the ability, knowledge, understanding, and output of ChatGPT-4.”) it aimed to write authoritatively.

- It also understood that I asked it to “unpack the answer, and unpack it again.” But this, too, is just style: give an iterative answer.

AI fails to know the knowing that matters most

A very smart researcher at RAND named Richard Neu schooled me years ago in one very important idea: in many ways, what you don’t know matters more than what you do. In fact, the awareness that there are things that you don’t know is very important, and leads to being smart. Doubting yourself is an essential skill. And, unfortunately, NOT a skill that AI tools possess.

I spent an hour trying to get the AI to identify when it didn’t know sh*t about the subject of the prompt, and well, it was never really able to say, “I don’t know.”

And I think that this is dangerous, maybe very dangerous. A wise person would know better than to take the AI’s response seriously, but there are a lot of people out there who are not as wise, and AI will very quickly become a tool for everyone, if it is not already. Bad decisions could result.

Almost as upsetting to me is the idea that we might become a society that is sucked in by really nice sounding answers, our collective ability to think more broadly and innovatively eventually being stifled by a steady stream of beautiful-sounding nonsense.