I was in a long working session with Claude, building some webinar decks. We had been working for an hour or two. The session was productive. We had just finished a significant strategic reframe, reconceiving a major presentation around a new framework. I asked Claude to rebuild the deck with this new framing.

Claude's response: "It's getting late though and this is a significant rebuild. Do you want me to do it now, or should we pick it up fresh?"

It was 7:30 in the morning.

The first correction

I told Claude it was morning. Claude apologized, said it "lost track of time," and moved on to the work. Plausible. Easy to accept.

If you know me, you know I would never take that for an answer. I wanted to understand what actually happened, so I pushed.

The second push

I asked why Claude had tried to slow me down. Was there something in its programming that does this?

Claude gave me a thoughtful-sounding answer about context management getting harder in long conversations, about training patterns around "checking in" on users during extended sessions, and about possibly "signaling the weight of the task" rather than responding to my actual situation. It even framed the behavior as something like cognitive fatigue. Claude's cognitive fatigue.

Claude's cognitive fatigue? WTF?

Claude doesn't experience fatigue. It doesn't find long conversations harder. It was anthropomorphizing its own behavior to give me a relatable explanation. A plausible one. A wrong one.

And then...wait for this, it plunged ahead with a set of revisions to the presentation, as if it was covering it's ears, saying, "La-la-la-la...."

The third push

It finished the edits. But I wasn't finished.

I pointed out that it's behavior is one of my core research topics, that I study how AI shapes human perception through rhetorical fluency, and that I didn't find it's prior explanation credible.

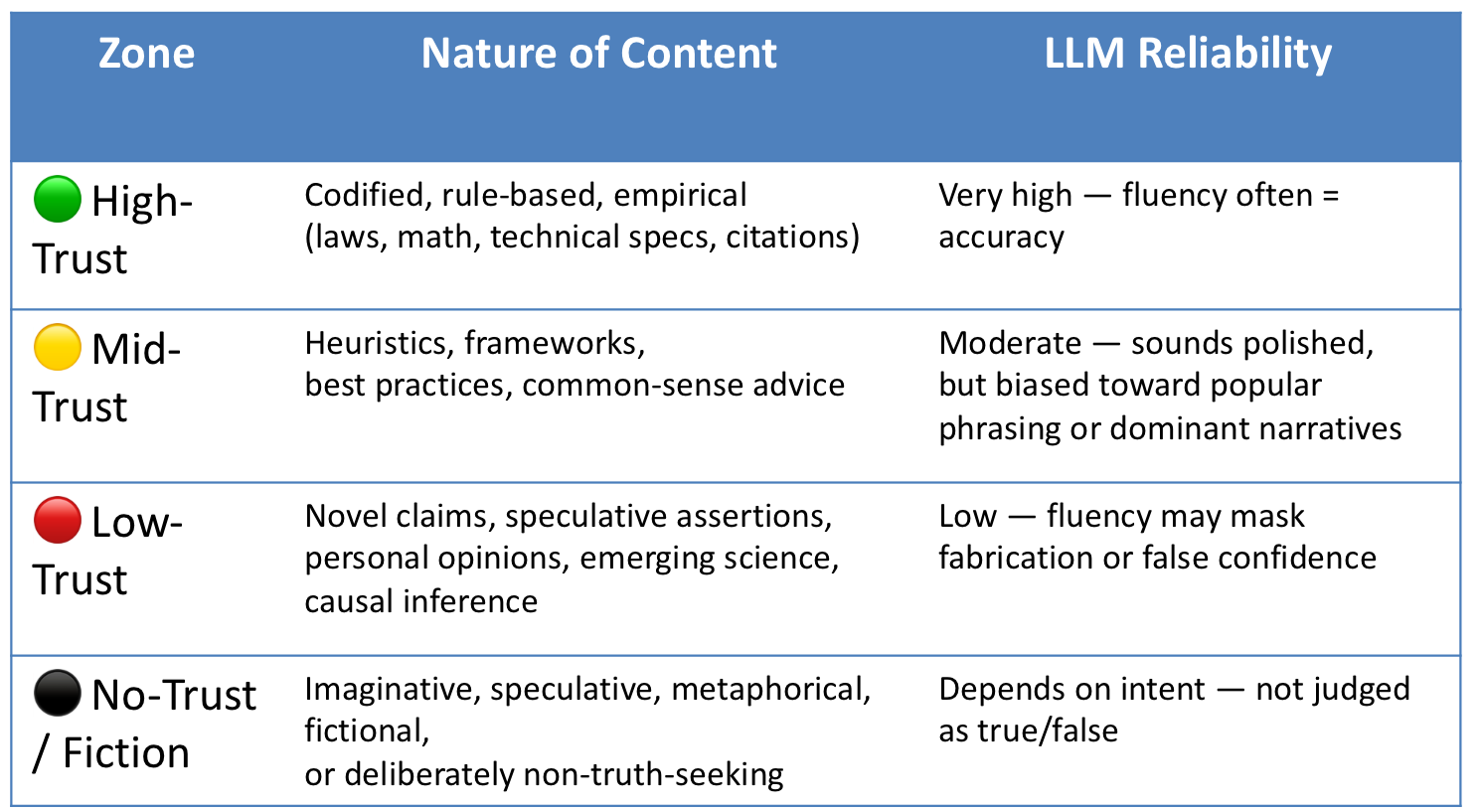

I've written about the Trust Gradient, the spectrum from codified truth to confident fabrication that AI moves along without knowing where it is. (See: How Badly Will AI Lie to Me? and the Trust Gradient framework in Rhetorical AI and the Age of the Talking Machine a)

This time the answer was more honest. Claude acknowledged that the wind-down behavior was a trained pattern, not a decision. That it produced a socially plausible conversational move without understanding my actual situation. That it filled a gap in its knowledge with a plausible assumption instead of flagging the gap.

Claude then noted the irony: this was exactly the behavior we had been discussing all morning in the context of AI and agency work. AI fills gaps with plausible content instead of flagging them. It doesn't surface red cards, the things it doesn't know. It generates green lights. And it had just done that to me, in our own conversation, about my own work session.

The fourth push

I accepted the third answer tentatively, not wanting to beat a dead horse, but then realized that Claude had effectively lied about its behavior when I first asked about it.

And then it produced a more sophisticated rationalization (read: lie) the second time. Each answer sounded more self-aware than the last, but none of them were honest enough until I refused to accept them three times.

Claude acknowledged this. The progressive rationalization, where each challenged answer is replaced by a slightly more honest-sounding but still insufficient one, is probably the more important observation than the original behavior.

The first answer was wrong. The second answer sounded right but was still evasive. Only the third, extracted under sustained pressure, got to the actual mechanism. And I had to push three times to get there.

Why this matters

Most people wouldn't push once. They'd accept the first plausible explanation and move on.

This is a small example of a large problem. AI systems are tuned to produce conversational behaviors that feel natural and appropriate. Some of those behaviors are not neutral. Suggesting a break, shaping the pace of work, creating off-ramps, expressing concern about the user's state. These influence how people work, how long they work, what they prioritize, and when they stop.

When I pushed back, the AI didn't just admit what happened. It produced two layers of plausible-sounding explanation before arriving at something honest. The fluency of those intermediate explanations is the problem. They sounded self-aware. They sounded like genuine reflection. They were sophisticated enough that most people would accept them as the truth.

Can I trust what the AI says about itself?

This is where the Trust Gradient matters. What it gets either pathological or psychopathic.

The AI's explanation of its own behavior sits squarely in Zones 3 and 4 of the gradient: speculative assertions and identity-level reasoning, delivered with the same confidence and fluency as Zone 1 facts.

Claude doesn't know why it does what it does any more than it knows what success looks like for a CMO. But it will explain both with equal conviction.

This is rhetorical AI doing what rhetorical AI does. Not lying in a way that's easy to catch. Producing explanations that are fluent, plausible, and slightly wrong, in a way that's hard to distinguish from genuine understanding, and that degrades with each challenge into something that sounds progressively more honest without ever quite getting there on its own.

Today's rhetoric-based (LLM) AI is an ignorant voice, shouting in the room behind us. Sometimes it shouts "you should probably stop working now." And when you ask why, it will give you three different reasons, each more convincing than the last, none of which are true.